内存分配从本质上来讲是1种空间管理算法,给你1块连续的空间,提供存储服务,那末你的空间管理跟分配要采取甚么样的算法才会比较高效?

Bump-the-Pointer是最简单的算法。HotSpot的MM白皮书是这么描写btp的,

That is, the end of the previously allocated object is always kept track of. When a new allocation request needs to be satisfied, all that needs to be done is to check whether the object will fit in the remaining part of the generation and, if so, to update the pointer and initialize the object.

我们只需要1个指针来记录可用空间的起始地址,收到分配要求,先检查可用空间是不是足够分配给这次要求,如果足够,则分配空间,并且更新指针;如果不够就需要进行垃圾回收了。我们再来看看HotSpot的SerialCollector是怎样使用btp的。

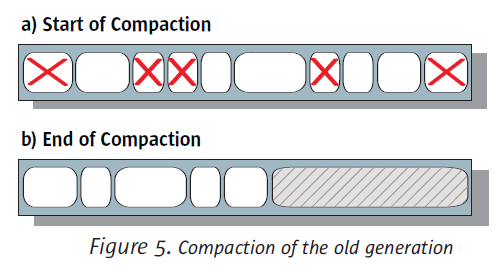

SerialCollector使用Mark-Sweep-Compact算法来对OldGen进行GC,对OldGen的分配,简单的bump the pointer,如果这时候候剩余的可用空间已不够,SerialCollector需要Stop-the-World,然后履行Mark-Sweep-Compact,即标记活着的对象,清除死去的对象,最后做sliding compaction,将活着的对象全部紧缩到1边,这样剩余的可用空间就能够继续简单的使用btp算法来分配空间了。

btp还有几个点说下,

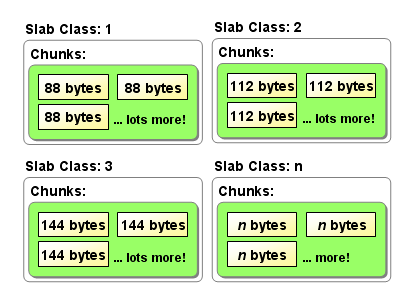

现在让我们来看另外1种采取分治策略的管理方法。我们可以将连续空间划分成1个个的组,每一个组内又包括若干个坑位,同组内每一个坑位的空间大小都是1样的,至于坑位的大小是可以根据使用情况来进行调优的。

只需要再保护每一个组的metadata,这样每次1有分配要求,先查看metadata就可以做到Best Fit。

Slab Allocator便是以上述方式工作的。与Bumb-the-Pointer相比,Slab不需要Stop-the-World来做GC,但是会造成1定的空间浪费,由于我们只能做到Best Fit。

Talk is cheap,下面来看看Memcached中的Slab实现。先看SlabClass的定义和初始化的方法,

typedef struct {

unsigned int size; /* sizes of items */

unsigned int perslab; /* how many items per slab */

void *slots; /* list of item ptrs */

unsigned int sl_curr; /* total free items in list */

unsigned int slabs; /* how many slabs were allocated for this class */

void **slab_list; /* array of slab pointers */

unsigned int list_size; /* size of prev array */

unsigned int killing; /* index+1 of dying slab, or zero if none */

size_t requested; /* The number of requested bytes */

} slabclass_t;void slabs_init(const size_t limit, const double factor, const bool prealloc) {

int i = POWER_SMALLEST - 1;

unsigned int size = sizeof(item) + settings.chunk_size;

mem_limit = limit;

if (prealloc) {

/* Allocate everything in a big chunk with malloc */

mem_base = malloc(mem_limit);

if (mem_base != NULL) {

mem_current = mem_base;

mem_avail = mem_limit;

} else {

fprintf(stderr, "Warning: Failed to allocate requested memory in"

" one large chunk.

Will allocate in smaller chunks

");

}

}

memset(slabclass, 0, sizeof(slabclass));

while (++i < POWER_LARGEST && size <= settings.item_size_max / factor) {

/* Make sure items are always n-byte aligned */

if (size % CHUNK_ALIGN_BYTES)

size += CHUNK_ALIGN_BYTES - (size % CHUNK_ALIGN_BYTES);

slabclass[i].size = size;

slabclass[i].perslab = settings.item_size_max / slabclass[i].size;

size *= factor;

if (settings.verbose > 1) {

fprintf(stderr, "slab class %3d: chunk size %9u perslab %7u

",

i, slabclass[i].size, slabclass[i].perslab);

}

}

power_largest = i;

slabclass[power_largest].size = settings.item_size_max;

slabclass[power_largest].perslab = 1;

if (settings.verbose > 1) {

fprintf(stderr, "slab class %3d: chunk size %9u perslab %7u

",

i, slabclass[i].size, slabclass[i].perslab);

}

/* for the test suite: faking of how much we've already malloc'd */

{

char *t_initial_malloc = getenv("T_MEMD_INITIAL_MALLOC");

if (t_initial_malloc) {

mem_malloced = (size_t)atol(t_initial_malloc);

}

}

if (prealloc) {

slabs_preallocate(power_largest);

}

}slabs_init方法中可以看到我们上面所说的坑位大小的调优,Memcached是通过配置1个factor来实现,坑位的大小是按factor倍增长的。

需要注意这里的size是sizeof(item) + settings.chunk_size,item是Memcached所存储的数据,在memcache.h中定义。存储系统的数据结构设计又是另外一个可以深究的话题,后面再探讨。

typedef struct _stritem {

struct _stritem *next;

struct _stritem *prev;

struct _stritem *h_next; /* hash chain next */

rel_time_t time; /* least recent access */

rel_time_t exptime; /* expire time */

int nbytes; /* size of data */

unsigned short refcount;

uint8_t nsuffix; /* length of flags-and-length string */

uint8_t it_flags; /* ITEM_* above */

uint8_t slabs_clsid;/* which slab class we're in */

uint8_t nkey; /* key length, w/terminating null and padding */

/* this odd type prevents type-punning issues when we do

* the little shuffle to save space when not using CAS. */

union {

uint64_t cas;

char end;

} data[];

/* if it_flags & ITEM_CAS we have 8 bytes CAS */

/* then null-terminated key */

/* then " flags length

" (no terminating null) */

/* then data with terminating

(no terminating null; it's binary!) */

} item;每个SlabClass里面有1组Slab,每一个Slab又拆分为slabclass.perslab个大小为slabclass.size的坑位。

static int grow_slab_list (const unsigned int id) {

slabclass_t *p = &slabclass[id];

if (p->slabs == p->list_size) {

size_t new_size = (p->list_size != 0) ? p->list_size * 2 : 16;

void *new_list = realloc(p->slab_list, new_size * sizeof(void *));

if (new_list == 0) return 0;

p->list_size = new_size;

p->slab_list = new_list;

}

return 1;

}

static void split_slab_page_into_freelist(char *ptr, const unsigned int id) {

slabclass_t *p = &slabclass[id];

int x;

for (x = 0; x < p->perslab; x++) {

do_slabs_free(ptr, 0, id);

ptr += p->size;

}

}

static int do_slabs_newslab(const unsigned int id) {

slabclass_t *p = &slabclass[id];

int len = settings.slab_reassign ? settings.item_size_max

: p->size * p->perslab;

char *ptr;

if ((mem_limit && mem_malloced + len > mem_limit && p->slabs > 0) ||

(grow_slab_list(id) == 0) ||

((ptr = memory_allocate((size_t)len)) == 0)) {

MEMCACHED_SLABS_SLABCLASS_ALLOCATE_FAILED(id);

return 0;

}

memset(ptr, 0, (size_t)len);

split_slab_page_into_freelist(ptr, id);

p->slab_list[p->slabs++] = ptr;

mem_malloced += len;

MEMCACHED_SLABS_SLABCLASS_ALLOCATE(id);

return 1;

}使用FreeList,也就是slabclass.slots来管理这些可用的坑位,

static void do_slabs_free(void *ptr, const size_t size, unsigned int id) {

slabclass_t *p;

item *it;

assert(((item *)ptr)->slabs_clsid == 0);

assert(id >= POWER_SMALLEST && id <= power_largest);

if (id < POWER_SMALLEST || id > power_largest)

return;

MEMCACHED_SLABS_FREE(size, id, ptr);

p = &slabclass[id];

it = (item *)ptr;

it->it_flags |= ITEM_SLABBED;

it->prev = 0;

it->next = p->slots;

if (it->next) it->next->prev = it;

p->slots = it;

p->sl_curr++;

p->requested -= size;

return;

}使用简单的互斥锁来应对并发情况。(为何不是锁SlabClass而是需要直接锁住全部Slab Allocator?)

void *slabs_alloc(size_t size, unsigned int id) {

void *ret;

pthread_mutex_lock(&slabs_lock);

ret = do_slabs_alloc(size, id);

pthread_mutex_unlock(&slabs_lock);

return ret;

}最后,上面说的都是逻辑结构,下面贴1下我整理的物理结构便于理解,其中slabclass[0].perslab=3。

mem_base slabclass[0].slots

| |

+---+---+---+---+---+---+---+---+---+---+

| X | X | X | X | | | | X | | |

+---+---+---+---+---+---+---+---+---+---+

| | |

slabclass[0] slabclass[1] slabclass[0]

.slab_list[0] .slab_list[0] .slab_list[1]Buddy Allocator采取的策略与Slab类似,在我理解它只是1种特殊的Slab Allocator,坑位的大小是2的次幂,这样就致使了Buddy的空间浪费要比Slab严重很多,例如1个33K的分配要求会被分配到64K的空间。说到底其实还是要根据实际使用情况来调剂坑位大小,减少空间的浪费。