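iOS8 Core Image In Swift: Automatically enhancing images and using the built-in filters

iOS8 Core Image In Swift: More complex filters

iOS8 Core Image In Swift: Face detection and mosaic (pixelation)

iOS8 Core Image In Swift: Real-time video filters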

class ViewController: UIViewController, AVCaptureVideoDataOutputSampleBufferDelegate {

var captureSession: AVCaptureSession!

var previewLayer: CALayer!

......

| In addition, the view controller conforms to the AVCaptureVideoDataOutputSampleBufferDelegate protocol, which is covered later. To use the AV framework, you must first import it: import AVFoundation |

override func viewDidLoad() {

super.viewDidLoad()

previewLayer = CALayer()

previewLayer.bounds = CGRectMake(0, 0, self.view.frame.size.height, self.view.frame.size.width)

previewLayer.position = CGPointMake(self.view.frame.size.width / 2.0, self.view.frame.size.height / 2.0)

previewLayer.setAffineTransform(CGAffineTransformMakeRotation(CGFloat(M_PI / 2.0)))

self.view.layer.insertSublayer(previewLayer, atIndex: 0)

setupCaptureSession()

}

func setupCaptureSession() {

captureSession = AVCaptureSession()

captureSession.beginConfiguration()

captureSession.sessionPreset = AVCaptureSessionPresetLow

let captureDevice = AVCaptureDevice.defaultDeviceWithMediaType(AVMediaTypeVideo)

let deviceInput = AVCaptureDeviceInput.deviceInputWithDevice(captureDevice, error: nil) as AVCaptureDeviceInput

if captureSession.canAddInput(deviceInput) {

captureSession.addInput(deviceInput)

}

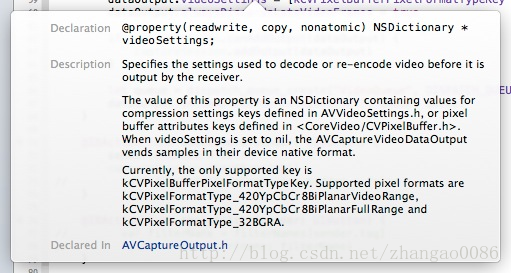

let dataOutput = AVCaptureVideoDataOutput()

dataOutput.videoSettings = [kCVPixelBufferPixelFormatTypeKey : kCVPixelFormatType_420YpCbCr8BiPlanarFullRange]

dataOutput.alwaysDiscardsLateVideoFrames = true

if captureSession.canAddOutput(dataOutput) {

captureSession.addOutput(dataOutput)

}

let queue = dispatch_queue_create("VideoQueue", DISPATCH_QUEUE_SERIAL)

dataOutput.setSampleBufferDelegate(self, queue: queue)

captureSession.commitConfiguration()

}

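This method is where the real work begins.

We have now finished setting up a session, but the session is not running yet. It is like accessing a database: you open the database, establish a connection, access the data, close the connection, and close the database. We start the session in the openCamera method: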

@IBAction func openCamera(sender: UIButton) {

sender.enabled = false

captureSession.startRunning()

}
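The database analogy above also implies a matching "close" step. The following is a minimal sketch of a start/stop pair, written in current Swift syntax rather than the Swift 1.x used in this article. The `CaptureSessionRunner` class and its queue label are hypothetical names introduced only for illustration. It relies on `startRunning()` being a blocking call, so it is dispatched to a serial background queue instead of the main thread.

```swift
import AVFoundation

/// Hypothetical helper (not from the article) that owns the session lifecycle.
final class CaptureSessionRunner {
    let captureSession: AVCaptureSession

    // Serial queue so that start and stop requests never overlap.
    private let sessionQueue = DispatchQueue(label: "SessionQueue")

    init(captureSession: AVCaptureSession) {
        self.captureSession = captureSession
    }

    /// Starts the flow of data. startRunning() blocks until the session
    /// is running, so it is kept off the main thread.
    func start() {
        sessionQueue.async {
            if !self.captureSession.isRunning {
                self.captureSession.startRunning()
            }
        }
    }

    /// Stops the flow of data, mirroring the "close the connection" step.
    func stop() {
        sessionQueue.async {
            if self.captureSession.isRunning {
                self.captureSession.stopRunning()
            }
        }
    }
}
```

A view controller could create one runner for its configured session, call `start()` from the button action, and call `stop()` when the view disappears.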

optional func captureOutput(captureOutput: AVCaptureOutput!, didOutputSampleBuffer sampleBuffer: CMSampleBuffer!, fromConnection connection: AVCaptureConnection!)

Implementing this callback is all that is needed:

func captureOutput(captureOutput: AVCaptureOutput!,

didOutputSampleBuffer sampleBuffer: CMSampleBuffer!,

fromConnection connection: AVCaptureConnection!) {

let imageBuffer = CMSampleBufferGetImageBuffer(sampleBuffer)

CVPixelBufferLockBaseAddress(imageBuffer, 0)

let width = CVPixelBufferGetWidthOfPlane(imageBuffer, 0)

let height = CVPixelBufferGetHeightOfPlane(imageBuffer, 0)

let bytesPerRow = CVPixelBufferGetBytesPerRowOfPlane(imageBuffer, 0)

let lumaBuffer = CVPixelBufferGetBaseAddressOfPlane(imageBuffer, 0)

let grayColorSpace = CGColorSpaceCreateDeviceGray()

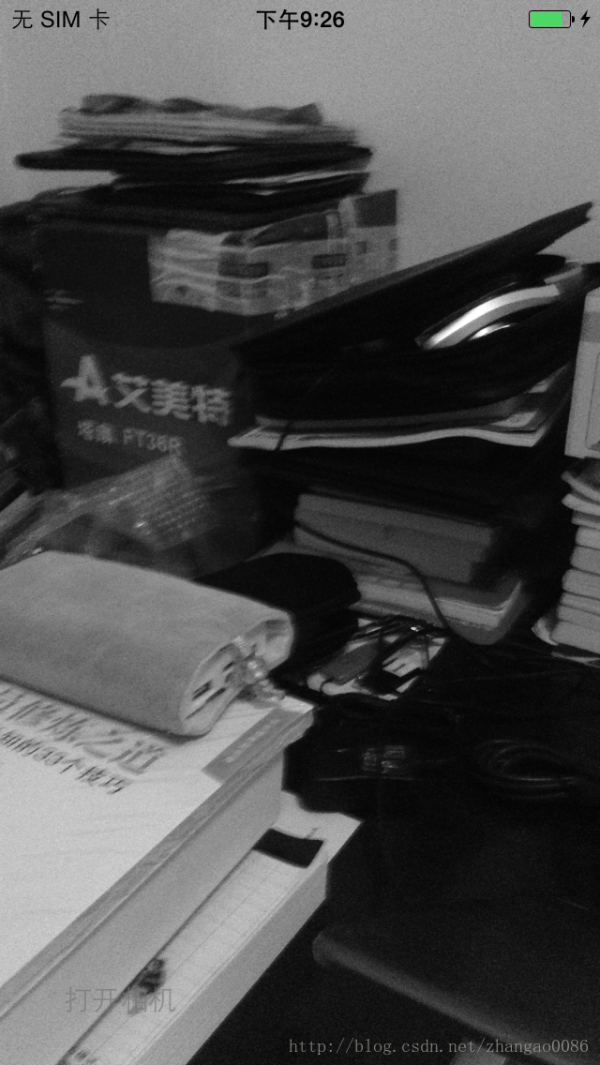

let context = CGBitmapContextCreate(lumaBuffer, width, height, 8, bytesPerRow, grayColorSpace, CGBitmapInfo.allZeros)

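let cgImage = CGBitmapContextCreateImage(context)

// Balance the earlier CVPixelBufferLockBaseAddress call once the image has been created
CVPixelBufferUnlockBaseAddress(imageBuffer, 0)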

dispatch_sync(dispatch_get_main_queue(), {

self.previewLayer.contents = cgImage

})

}
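The callback above reads plane 0 of the bi-planar YpCbCr buffer, which holds the 8-bit luma (brightness) samples, and passes `bytesPerRow` to the bitmap context instead of assuming each row is exactly `width` bytes long. Rows may be padded, so the stride can be larger than the width. The following framework-free sketch in current Swift syntax shows how such a padded plane maps to pixels. The function name `tightlyPackedLuma` and the sample data are made up for illustration.

```swift
/// Copies the visible luma samples out of a plane whose rows may be padded.
/// - plane: raw bytes of the luma plane, `bytesPerRow` bytes per row
/// - width, height: visible size of the plane in pixels
/// - bytesPerRow: stride of one row in bytes (at least `width`)
func tightlyPackedLuma(plane: [UInt8], width: Int, height: Int, bytesPerRow: Int) -> [UInt8] {
    precondition(width >= 0 && height >= 0, "dimensions must not be negative")
    precondition(bytesPerRow >= width, "stride must cover a full row")
    guard width > 0 && height > 0 else { return [] }
    // The last row only needs `width` bytes; trailing padding may be absent.
    precondition(plane.count >= (height - 1) * bytesPerRow + width, "plane is too small")

    var packed = [UInt8]()
    packed.reserveCapacity(width * height)
    for row in 0..<height {
        let start = row * bytesPerRow          // rows begin at multiples of the stride
        packed.append(contentsOf: plane[start..<(start + width)])
    }
    return packed
}

// A 3x2 plane with one padding byte per row (stride 4).
let samplePlane: [UInt8] = [10, 20, 30, 0,
                            40, 50, 60, 0]
let pixels = tightlyPackedLuma(plane: samplePlane, width: 3, height: 2, bytesPerRow: 4)
// pixels == [10, 20, 30, 40, 50, 60]
```

Handing the stride to the bitmap context, as the callback does, avoids this copy entirely: the context skips the padding itself.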

var filter: CIFilter!

lazy var context: CIContext = {

let eaglContext = EAGLContext(API: EAGLRenderingAPI.OpenGLES2)

let options = [kCIContextWorkingColorSpace : NSNull()]

return CIContext(EAGLContext: eaglContext, options: options)

}()

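| In fact, a GPU context created with contextWithOptions: does perform its rendering on the GPU, but the image it outputs cannot be displayed directly. Only after the result is copied back to CPU memory is it converted into a displayable image type such as UIImage. |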
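As a rough illustration of that "copy back" path, the sketch below renders a filtered CIImage into a CGImage, which lives in CPU-accessible memory and can be wrapped in a UIImage or assigned to a layer. It uses current Swift syntax rather than the Swift 1.x of this article. The function name `renderSepia` and the choice of the CISepiaTone filter are assumptions made only for the example, not the filter used by the article.

```swift
import CoreImage
import CoreGraphics

/// Applies a sepia filter (an arbitrary example filter) and renders the
/// result into a CGImage using the supplied context.
func renderSepia(from input: CIImage, using context: CIContext) -> CGImage? {
    guard let filter = CIFilter(name: "CISepiaTone") else { return nil }
    filter.setValue(input, forKey: kCIInputImageKey)
    filter.setValue(0.8, forKey: kCIInputIntensityKey)

    guard let output = filter.outputImage else { return nil }
    // createCGImage performs the render and returns a bitmap-backed image.
    return context.createCGImage(output, from: output.extent)
}

// Usage: an 8x8 solid red test image stands in for a camera frame.
let testInput = CIImage(color: CIColor(red: 1, green: 0, blue: 0))
    .cropped(to: CGRect(x: 0, y: 0, width: 8, height: 8))
let rendered = renderSepia(from: testInput, using: CIContext())
```

Creating a CGImage for every video frame adds a copy per frame, which is the cost the note above describes.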

kCVPixelFormatType_420YpCbCr8BiPlanarFullRange

should be replaced with

kCVPixelFormatType_32BGRA

Then modify the session callback a little so that it takes the form we are already familiar with, like this:

func captureOutput(captureOutput: AVCaptureOutput!,

didOutputSampleBuffer sampleBuffer: CMSampleBuffer!,

fromConnection connection: AVCaptureConnection!) {

let imageBuffer = CMSampleBufferGetImageBuffer(sampleBuffer)

// CVPixelBufferLockBaseAddress(imageBuffer, 0)

// let width = CVPixelBufferGetWidthOfPlane(imageBuffer, 0)

// let height = CVPixelBufferGetHeightOfPlane(imageBuffer, 0)

// let bytesPerRow = CVPixelBufferGetBytesPerRowOfPlane(imageBuffer, 0)
